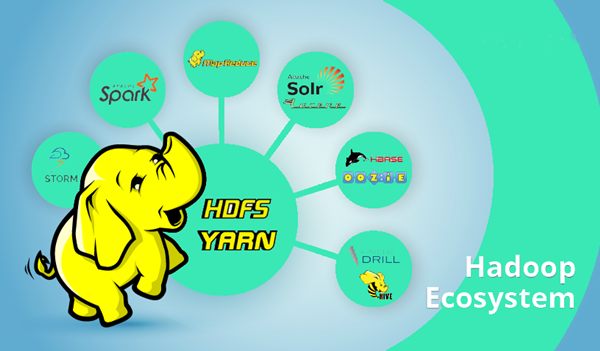

So, today let's talk about big data and HADOOP. HADOOP is an open-source software framework that stores data and runs applications on clusters of commodity hardware. It provides massive storage for any type of data, enormous processing power, and the ability to handle a very large number of concurrent tasks or jobs. This big data HADOOP tutorial explains more.

What is big data HADOOP and why is it so important?

The following are some of the reasons why HADOOP has gained so much importance:

HADOOP can store and process very large amounts of any type of data quickly. With data volumes and data types constantly increasing, particularly from social media and the Internet of Things (IoT), this has become a key consideration.

What is Big Data HADOOP Tutorial for Beginners

Computing Capability:

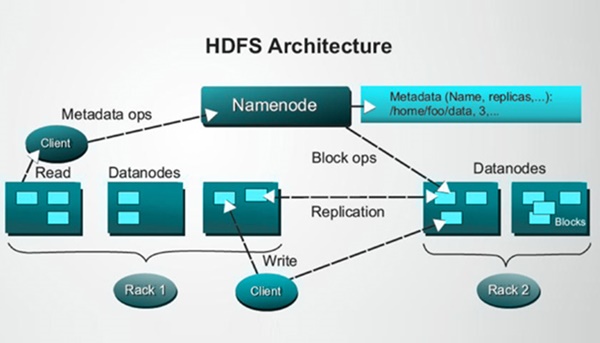

HADOOP's distributed computing model processes big data quickly. The more computing nodes you use, the more processing power you have.
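To make the distributed model a little more concrete, here is a minimal sketch of the map, shuffle, and reduce steps used by MapReduce, HADOOP's classic processing model. It runs on a single machine in plain Python and only simulates nodes as chunks of input, so it illustrates the idea rather than real HADOOP code. The function names (`split_into_chunks`, `map_phase`, `shuffle`, `reduce_phase`, `word_count`) and the sample data are made up for this example.

```python
from collections import defaultdict


def split_into_chunks(records, num_nodes):
    """Deal records round-robin into one chunk per simulated node."""
    chunks = [[] for _ in range(num_nodes)]
    for i, record in enumerate(records):
        chunks[i % num_nodes].append(record)
    return chunks


def map_phase(chunk):
    """Map step: emit a (word, 1) pair for every word in the chunk."""
    return [(word.lower(), 1) for line in chunk for word in line.split()]


def shuffle(mapped_chunks):
    """Shuffle step: group all emitted values by key across chunks."""
    grouped = defaultdict(list)
    for pairs in mapped_chunks:
        for key, value in pairs:
            grouped[key].append(value)
    return grouped


def reduce_phase(grouped):
    """Reduce step: sum the values collected for each key."""
    return {key: sum(values) for key, values in grouped.items()}


def word_count(records, num_nodes):
    """Count words; each map_phase call stands in for work done on one node."""
    chunks = split_into_chunks(records, num_nodes)
    mapped = [map_phase(chunk) for chunk in chunks]  # sequential here, parallel on a cluster
    return reduce_phase(shuffle(mapped))


lines = ["big data needs big storage", "hadoop stores big data"]
counts = word_count(lines, num_nodes=2)
print(counts)
```

Because each chunk is mapped independently, the result is the same no matter how many simulated nodes you split the work across. That independence is what lets a real cluster spread the map step over many machines.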

Fault Tolerance:

Data and application processing are protected against hardware failure. If a node goes down, jobs are automatically redirected to other nodes so that the distributed computation does not stop. Multiple copies of all data are stored automatically.
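The idea of keeping multiple copies can be sketched in a few lines of Python. HADOOP's file system (HDFS) keeps three replicas of each data block by default. The simulation below is a simplification: it places replicas round-robin, whereas real HDFS placement also takes racks into account, and the names `place_blocks`, `readable_blocks`, and the node and block labels are invented for illustration.

```python
REPLICATION_FACTOR = 3  # matches the HDFS default; configurable on a real cluster


def place_blocks(block_ids, nodes, replication=REPLICATION_FACTOR):
    """Assign each block to `replication` distinct nodes (simple round-robin)."""
    if replication > len(nodes):
        raise ValueError("need at least as many nodes as replicas")
    placement = {}
    for i, block in enumerate(block_ids):
        placement[block] = {nodes[(i + r) % len(nodes)] for r in range(replication)}
    return placement


def readable_blocks(placement, failed_nodes):
    """Return the blocks that still have at least one replica on a live node."""
    failed = set(failed_nodes)
    return {block for block, holders in placement.items() if holders - failed}


nodes = ["node1", "node2", "node3", "node4"]
blocks = ["blk_0", "blk_1", "blk_2", "blk_3", "blk_4"]
placement = place_blocks(blocks, nodes)

# Simulate one node going down and check which blocks can still be read.
survivors = readable_blocks(placement, failed_nodes=["node2"])
print(sorted(survivors))
```

With three replicas per block, losing one or even two nodes in this toy cluster still leaves every block readable. Data is only lost when all nodes holding a block's replicas fail at once.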

Flexibility:

In traditional relational databases, you need to preprocess data before storing it. In HADOOP, you don't. You can store as much data as you want and decide later how to use it. This includes unstructured data like text, images, and videos.
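This "store now, decide later" approach is often called schema-on-read. Here is a small, hedged sketch of the idea in plain Python: raw records are kept exactly as they arrived, and a structure is applied only when someone reads them. The `raw_store` list, the `read_with_schema` helper, and the sample records are all hypothetical and stand in for files sitting in HADOOP storage.

```python
import json

# Raw records are stored exactly as they arrived; no schema is enforced on write.
raw_store = [
    '{"user": "ana", "action": "click", "ms": 120}',
    '{"user": "bo", "action": "view"}',
    "free-form log line that is not JSON",
]


def read_with_schema(raw_records, fields):
    """Apply a schema at read time: keep JSON objects, fill missing fields with None."""
    rows = []
    for record in raw_records:
        try:
            parsed = json.loads(record)
        except json.JSONDecodeError:
            continue  # the raw record stays in the store; this reader just skips it
        if isinstance(parsed, dict):
            rows.append({field: parsed.get(field) for field in fields})
    return rows


# Two different "views" over the same untouched raw data.
timings = read_with_schema(raw_store, fields=["user", "ms"])
actions = read_with_schema(raw_store, fields=["user", "action"])
print(timings)
print(actions)
```

Nothing was thrown away or reshaped when the data was stored, so a later reader is free to pick different fields, or to handle the free-form line in its own way.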

Low Cost:

The open-source framework is free and uses commodity hardware to store large quantities of data.

Scalability:

You can easily grow your system to handle more data simply by adding nodes. Little administration is required.

How is HADOOP Used?

As low-cost storage and data archive:

The modest cost of commodity hardware makes HADOOP well suited to storing and combining data. Because storage costs less, you can keep information that is not critical at the moment but might be needed later.

Sandbox for Discovery and Analysis:

Because HADOOP was designed to handle large volumes of data of different types, it can run analytical algorithms over them. These analytics can help your organization operate more efficiently, uncover new opportunities, and gain a competitive edge. The sandbox approach makes it possible to innovate with very little investment.

Data Lake:

Data lakes store data in its original format. This gives data scientists and analysts a raw, unrefined view of the data for discovery and analytics, and helps them ask new questions.

Complement for Data Warehouse:

Some data sets are being offloaded from the data warehouse into HADOOP, and new types of data are being sent directly to HADOOP. The end goal for every organization is to have the right platform for storing and processing data of different kinds to support different use cases.

IoT and HADOOP:

In the IoT, things need to know what to communicate and when to act. At the core of the IoT is a streaming, always-on torrent of data. HADOOP is often used as the data store for billions of transactions. Its massive storage and processing capabilities also allow HADOOP to function as a sandbox for discovery and analysis. You can then continuously improve on what you are building, because HADOOP is constantly being updated with new data that does not match previous patterns.

This big data HADOOP tutorial for beginners is mainly meant to give you an idea of what big data and HADOOP are. Once you know what big data is and why it is used, you can find different uses for it. I hope this article has offered some insight into this huge ocean. So, get going! I am sure you will learn as you progress and work on these things regularly. All the best!